The Architecture Behind ChatGPT and How Transformers Work in Deep Learning

A single mathematical breakthrough created ChatGPT. To understand how transformers work in deep learning, you need to first understand one equation, one architectural insight, and one paper from 2017.


Everything we see in modern AI today traces back to one equation, one structural insight, and a single academic paper from 2017. That paper, "Attention Is All You Need," introduced the transformer architecture that five years later would power ChatGPT and trigger the largest shift in software since the smartphone.

This guide walks through how transformers work in deep learning with the actual math, the actual code, and the actual decisions you need to make when building one.

By the end, you will know how self-attention works, why multi-head attention matters, how positional encoding solves a hidden problem, and how the same block, stacked 96 times, became GPT-3, the direct ancestor of ChatGPT.

Table of Contents

- The Problem Nobody Could Solve Before 2017

- How Self-Attention Works in Deep Learning

- Building Attention From Scratch

- Multi-Head Attention Explained

- Positional Encoding, the Quietly Critical Piece

- The Full Transformer Block

- From Transformer to GPT

- Practical Tips for Working With Transformers

- Why This Worked When Nothing Else Did

- Frequently Asked Questions

What Is a Transformer in Deep Learning?

A transformer is a neural network architecture introduced in 2017 that processes sequences using a mechanism called self-attention. Unlike older recurrent networks that read tokens one at a time, transformers process all tokens in parallel and let every position directly attend to every other position. This makes them faster to train, better at capturing long-range dependencies, and the foundation of modern large language models like ChatGPT, Claude, and Gemini.


The Problem Nobody Could Solve Before 2017

Before transformers, sequence modeling meant recurrent neural networks. An RNN reads one word at a time, updates a hidden state, then moves on. LSTMs and GRUs improved the basic idea by adding gating, but the fundamental shape stayed the same. Process token one. Update state. Process token two. Update state. Repeat.

This had two killer problems.

First, you cannot parallelize across the sequence. Token 47 depends on the hidden state from token 46, which depends on token 45, all the way back to token 1. Your GPU has 10,000 cores sitting idle while the network does sequential bookkeeping.

Second, long-range dependencies decay. If the subject of a sentence appears in word 3 and the verb appears in word 80, the gradient signal connecting them passes through 77 multiplications. Either the gradient explodes or it vanishes. In practice it vanishes.
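To make the decay concrete, here is a minimal sketch using a scalar linear recurrence, h_t = w · h_{t-1} + x_t. This is a deliberate simplification of a real RNN (no nonlinearity, no gates, a single number instead of a hidden vector). The gradient of the final state with respect to the first input is w multiplied by itself once per step, so across 77 steps it collapses when |w| < 1 and blows up when |w| > 1.

```python
import torch


def gradient_through_time(w: float, steps: int) -> float:
    """Return d h_T / d x_0 for the scalar recurrence h_t = w * h_{t-1} + x_t.

    Later inputs x_1..x_T are constants with respect to x_0, so only the
    path through every step contributes. Analytically the answer is w ** steps.
    """
    x0 = torch.tensor(1.0, requires_grad=True)
    h = x0
    for _ in range(steps):
        h = w * h  # the constant later inputs add nothing to this gradient
    h.backward()
    return x0.grad.item()


for w in (0.9, 1.0, 1.1):
    print(f"w={w}: gradient after 77 steps = {gradient_through_time(w, 77):.3e}")
```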

People tried convolutional models like ByteNet and ConvS2S. Those parallelized better but needed deep stacks to capture long context. Nothing felt right.

How Do Transformers Work in Deep Learning?

Transformers work in deep learning through a sequence of seven core operations:

- Tokenization breaks input text into smaller units called tokens.

- Embedding converts each token into a high-dimensional vector.

- Positional encoding adds information about each token's position in the sequence.

- Self-attention computes how much each token should attend to every other token.

- Multi-head attention runs several attention operations in parallel to capture different relationships.

- Feed-forward layers transform each position independently to add representational depth.

- Output projection converts the final vectors back into token probabilities for prediction.

The same block of self-attention plus feed-forward layers is stacked dozens of times, with each layer refining the representation built by the layer below it.
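The seven steps can be traced end to end in a toy sketch. Everything in it is an illustrative assumption rather than a real model: a whitespace "tokenizer" over a five-word vocabulary, random untrained weights, a single attention head via PyTorch's F.scaled_dot_product_attention (PyTorch 2.0 or later), one block instead of a stack, and no layer normalization. It is only meant to show what each step does to the tensors.

```python
import math

import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# 1. Tokenization: a hypothetical whitespace tokenizer over a five-word vocabulary.
vocab = {"the": 0, "cat": 1, "sat": 2, "on": 3, "mat": 4}
token_ids = torch.tensor([[vocab[w] for w in "the cat sat on the mat".split()]])  # (1, 6)
seq_len, d_model = token_ids.shape[1], 16

# 2. Embedding: one learned vector per token id.
embed = nn.Embedding(len(vocab), d_model)
x = embed(token_ids)  # (1, 6, 16)

# 3. Positional encoding: sinusoidal, added to the embeddings.
pos = torch.arange(seq_len, dtype=torch.float32).unsqueeze(1)
inv_freq = torch.exp(-torch.arange(0, d_model, 2, dtype=torch.float32) / d_model * math.log(10000.0))
pe = torch.zeros(seq_len, d_model)
pe[:, 0::2] = torch.sin(pos * inv_freq)
pe[:, 1::2] = torch.cos(pos * inv_freq)
x = x + pe  # broadcasts over the batch dimension

# 4-5. Self-attention: one head here; multi-head would split d_model across heads.
W_q, W_k, W_v = (nn.Linear(d_model, d_model, bias=False) for _ in range(3))
x = x + F.scaled_dot_product_attention(W_q(x), W_k(x), W_v(x))  # residual add

# 6. Position-wise feed-forward network, also with a residual add.
ff = nn.Sequential(nn.Linear(d_model, 4 * d_model), nn.GELU(), nn.Linear(4 * d_model, d_model))
x = x + ff(x)

# 7. Output projection to vocabulary logits, then a distribution per position.
lm_head = nn.Linear(d_model, len(vocab))
probs = F.softmax(lm_head(x), dim=-1)
print(probs.shape)  # torch.Size([1, 6, 5])
```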

How Self-Attention Works in Deep Learning

Self-attention in one sentence: for each position in a sequence, it computes a weighted average over all positions, where the weights come from learned projections that score how relevant every other position is to the current one.

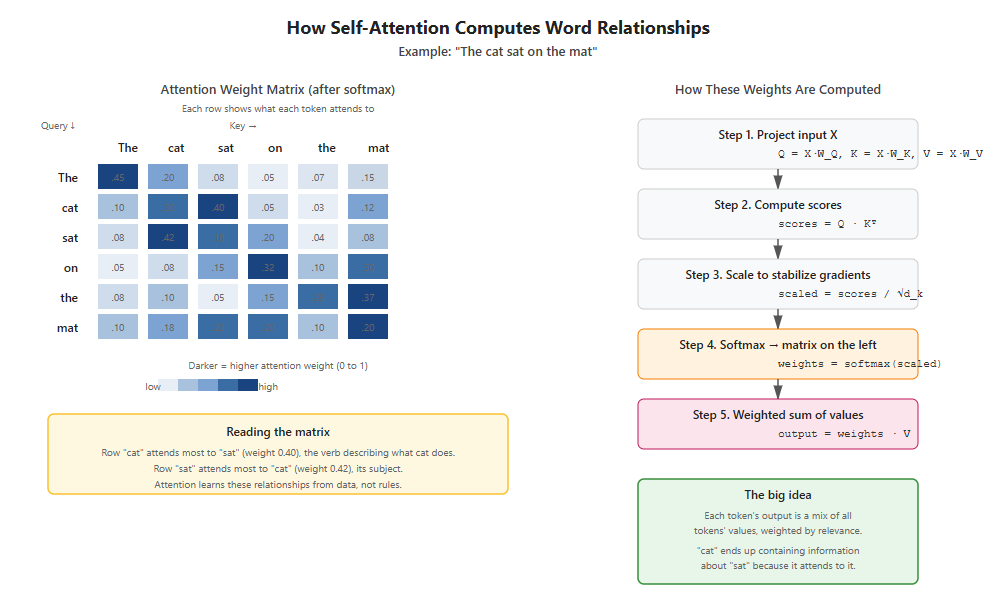

The transformer's central trick is a function called scaled dot-product attention. Given a sequence of vectors, attention lets every position look at every other position using two matrix multiplications and a softmax. No recurrence. No depth required to span distance. One operation.

Here is the math.

Attention(Q, K, V) = softmax(Q · Kᵀ / √dₖ) · V

Three matrices show up. Q is queries, K is keys, V is values. Q and K are shaped (n, dₖ) and V is shaped (n, d_v), where n is the sequence length. In the simplest single-head setup, both widths equal the embedding dimension.

Read this like a database lookup. Every token issues a query asking "what am I looking for?" Every token publishes a key answering "what do I represent?" The dot product Q · Kᵀ measures how well each query matches each key. Softmax turns those scores into a probability distribution over positions. Then you take a weighted average of the values V using those probabilities.
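A tiny worked example may help. The numbers below are made up: three tokens with two-dimensional queries, keys, and values, where the first token's query was chosen to line up with the second token's key. The code applies the formula literally.

```python
import math

import torch

# Hypothetical 3-token sequence with d_k = 2.
Q = torch.tensor([[0.0, 2.0], [1.0, 0.0], [1.0, 1.0]])
K = torch.tensor([[1.0, 0.0], [0.0, 2.0], [0.5, 0.5]])
V = torch.tensor([[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]])

d_k = Q.shape[-1]
scores = Q @ K.T / math.sqrt(d_k)        # (3, 3): how well each query matches each key
weights = torch.softmax(scores, dim=-1)  # each row is a probability distribution
output = weights @ V                     # (3, 2): weighted average of the values

print(weights)
print(output)
```

The first row of weights puts most of its mass on position 1, so the first output is pulled toward the second token's value.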

The √dₖ divisor matters more than it looks. If the components of queries and keys are independent with mean 0 and variance 1, their dot product has variance dₖ, so for large dₖ the scores grow in magnitude and push softmax into regions with vanishing gradients. Dividing by √dₖ brings the variance of the scores back to roughly 1 regardless of dimension. This is one of those tiny details you can stare at for a year before noticing it broke your training run.
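The variance argument is easy to check numerically under the same assumption: query and key components drawn independently with mean 0 and variance 1. The sample size of 10,000 dot products is arbitrary.

```python
import math

import torch

torch.manual_seed(0)


def score_variances(d_k: int, n: int = 10_000) -> tuple[float, float]:
    """Return (variance of raw q.k, variance of q.k / sqrt(d_k)) over n random pairs."""
    q = torch.randn(n, d_k)  # unit-variance components, as assumed above
    k = torch.randn(n, d_k)
    dots = (q * k).sum(dim=-1)
    return dots.var().item(), (dots / math.sqrt(d_k)).var().item()


for d_k in (16, 64, 256, 1024):
    raw, scaled = score_variances(d_k)
    print(f"d_k={d_k:5d}  raw variance={raw:8.1f}  scaled variance={scaled:.3f}")
```

The raw variance tracks dₖ, while the scaled variance stays near 1 at every width.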

The example above makes this concrete. When attention processes "The cat sat on the mat," the word "cat" learns to attend strongly to "sat" because they share a subject-verb relationship. No one programmed this. The model discovered it by adjusting Q and K projection matrices during training. Every transformer layer in every modern LLM works this way.

The same attention mechanism powers vision models too, though it brings its own failure modes when applied to images, as covered in our deep dive on why vision transformers dump garbage into random pixels.

Building Attention From Scratch

Let me show you what this looks like in code. We will build attention in PyTorch with no shortcuts.

```python
import math

import torch
import torch.nn as nn
import torch.nn.functional as F


class ScaledDotProductAttention(nn.Module):
    def __init__(self, d_model, d_k):
        super().__init__()
        # Learned projections from the model dimension into query, key, and value spaces.
        self.W_q = nn.Linear(d_model, d_k, bias=False)
        self.W_k = nn.Linear(d_model, d_k, bias=False)
        self.W_v = nn.Linear(d_model, d_k, bias=False)
        self.d_k = d_k

    def forward(self, x, mask=None):
        # x: (batch, seq_len, d_model)
        Q = self.W_q(x)
        K = self.W_k(x)
        V = self.W_v(x)
        # (batch, seq_len, seq_len): similarity of every query with every key
        scores = Q @ K.transpose(-2, -1) / math.sqrt(self.d_k)
        if mask is not None:
            # Positions where mask == 0 get -inf, so softmax gives them zero weight.
            scores = scores.masked_fill(mask == 0, float('-inf'))
        weights = F.softmax(scores, dim=-1)
        output = weights @ V
        return output, weights
```

Notice three details that matter in production.

The bias is set to False on the linear projections. The paper's equations define the query, key, and value projections as plain matrix multiplications, and many modern models, Llama among them, drop these bias terms. They add parameters without a clear benefit, so leaving them out is a sensible default.

The mask uses negative infinity rather than zero. If you mask scores to zero before softmax, those positions still receive non-zero probability after softmax. Setting them to negative infinity makes their post-softmax weight exactly zero.

The weights tensor is returned alongside the output. When you debug a transformer, attention weight visualizations are your best friend. Always make them accessible.
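The difference between masking with zero and masking with negative infinity shows up with just three scores. The values below are arbitrary, and the last position is the one meant to be hidden.

```python
import torch

scores = torch.tensor([2.0, 1.0, 3.0])
keep = torch.tensor([1, 1, 0])  # 0 marks the position to hide

zero_masked = torch.softmax(scores.masked_fill(keep == 0, 0.0), dim=-1)
inf_masked = torch.softmax(scores.masked_fill(keep == 0, float("-inf")), dim=-1)
print(zero_masked)  # the "hidden" position still receives some probability
print(inf_masked)   # the hidden position receives exactly 0

# Edge case: a row where every position is masked turns into NaN.
all_masked = torch.softmax(torch.full((3,), float("-inf")), dim=-1)
print(all_masked)
```

The last line shows a caveat worth remembering: softmax over nothing but negative infinities returns NaN, so every query needs at least one key it is allowed to see.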

Multi-Head Attention Explained

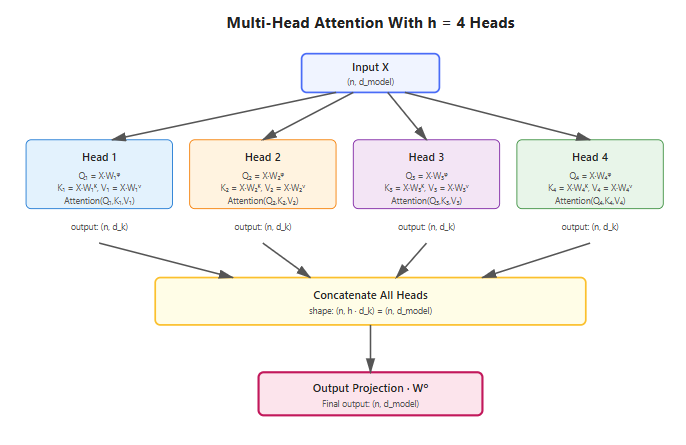

A single attention operation captures one type of relationship. You probably want many. Multi-head attention runs h attention operations in parallel, each with its own W_q, W_k, W_v, then concatenates the results.

MultiHead(Q, K, V) = Concat(head₁, head₂, ..., headₕ) · Wᴼ

where headᵢ = Attention(Q · Wᵢ^Q, K · Wᵢ^K, V · Wᵢ^V)

Each head operates in a smaller subspace of size dₖ = d_model / h. Total compute stays roughly constant compared to single-head attention with full dimension. You get diversity for free.

In PyTorch, the implementation uses a clever trick. Instead of allocating h separate small projection matrices, you allocate one large matrix and reshape its output into h heads. The math is identical, the GPU runs faster, and the code is shorter.

```python
class MultiHeadAttention(nn.Module):
    def __init__(self, d_model, num_heads):
        super().__init__()
        assert d_model % num_heads == 0, "d_model must be divisible by num_heads"
        self.d_model = d_model
        self.num_heads = num_heads
        self.d_k = d_model // num_heads
        # One fused projection per role; heads are split out later by reshaping.
        self.W_q = nn.Linear(d_model, d_model, bias=False)
        self.W_k = nn.Linear(d_model, d_model, bias=False)
        self.W_v = nn.Linear(d_model, d_model, bias=False)
        self.W_o = nn.Linear(d_model, d_model)

    def forward(self, x, mask=None):
        batch_size, seq_len, _ = x.shape
        # (batch, seq_len, d_model) -> (batch, num_heads, seq_len, d_k)
        Q = self.W_q(x).view(batch_size, seq_len, self.num_heads, self.d_k).transpose(1, 2)
        K = self.W_k(x).view(batch_size, seq_len, self.num_heads, self.d_k).transpose(1, 2)
        V = self.W_v(x).view(batch_size, seq_len, self.num_heads, self.d_k).transpose(1, 2)
        scores = Q @ K.transpose(-2, -1) / math.sqrt(self.d_k)
        if mask is not None:
            # mask broadcasts against (batch, num_heads, seq_len, seq_len)
            scores = scores.masked_fill(mask == 0, float('-inf'))
        weights = F.softmax(scores, dim=-1)
        attended = weights @ V
        # Merge heads: (batch, num_heads, seq_len, d_k) -> (batch, seq_len, d_model)
        attended = attended.transpose(1, 2).contiguous().view(batch_size, seq_len, self.d_model)
        return self.W_o(attended)
```
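The claim that one large projection is mathematically identical to h small ones can be checked directly. This sketch, with arbitrary sizes, slices the fused weight into per-head blocks, builds h separate small nn.Linear layers from those blocks, and compares their outputs with the view-and-transpose reshape used in MultiHeadAttention.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
batch, seq_len, d_model, num_heads = 2, 5, 32, 4
d_k = d_model // num_heads

fused = nn.Linear(d_model, d_model, bias=False)
x = torch.randn(batch, seq_len, d_model)

# Fused path: one matmul, then split the output features into heads.
fused_heads = fused(x).view(batch, seq_len, num_heads, d_k).transpose(1, 2)  # (B, h, n, d_k)

# Separate path: head i owns output rows i*d_k .. (i+1)*d_k - 1 of the fused weight.
separate = []
for i in range(num_heads):
    head_proj = nn.Linear(d_model, d_k, bias=False)
    with torch.no_grad():
        head_proj.weight.copy_(fused.weight[i * d_k:(i + 1) * d_k])
    separate.append(head_proj(x))
separate_heads = torch.stack(separate, dim=1)  # (B, h, n, d_k)

print(torch.allclose(fused_heads, separate_heads, atol=1e-5))
```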


What do these heads actually learn? Researchers have probed this extensively. Some heads track syntactic dependencies like subject-verb agreement. Some track positional patterns like "previous token" or "first token of sentence." Some track semantic roles. You rarely need to engineer this. Gradient descent finds useful divisions of labor on its own.

Positional Encoding, the Quietly Critical Piece

Attention has a strange property. It is permutation equivariant: shuffle the input tokens and the output tokens get shuffled the same way, with each token's output otherwise unchanged. That means raw self-attention has no idea about word order, and any honest answer to how transformers work in deep learning has to address this.
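Here is a quick numerical check of that property, using plain single-head attention with random weights and no positional information. Permuting the input rows permutes the output rows in exactly the same way, so a token's output carries no trace of where it sat in the sequence.

```python
import math

import torch

torch.manual_seed(0)
n, d = 6, 8
x = torch.randn(n, d)
W_q, W_k, W_v = (torch.randn(d, d) / math.sqrt(d) for _ in range(3))


def attention(x: torch.Tensor) -> torch.Tensor:
    # Single-head self-attention with no positional encoding.
    Q, K, V = x @ W_q, x @ W_k, x @ W_v
    return torch.softmax(Q @ K.T / math.sqrt(d), dim=-1) @ V


perm = torch.randperm(n)
# Shuffle the inputs, then attend == attend, then shuffle the outputs.
print(torch.allclose(attention(x[perm]), attention(x)[perm], atol=1e-5))
```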

You fix this by adding positional information to the input embeddings before attention sees them. The original transformer used sinusoidal positions.

PE(pos, 2i) = sin(pos / 10000^(2i / d_model))

PE(pos, 2i+1) = cos(pos / 10000^(2i / d_model))

Each position gets a unique vector. Adjacent positions get similar vectors. Distant positions get dissimilar vectors. The 10000 base means the wavelengths span from 2π to 10000 · 2π, covering both fine-grained and coarse-grained position information.
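A direct translation of the two formulas into PyTorch might look like the sketch below. It computes 1 / 10000^(2i / d_model) in log space, a common implementation choice, and assumes d_model is even. The dot product of two encodings works out to a sum of cosines of the position offset, which is why nearby positions tend to score higher than distant ones.

```python
import math

import torch


def sinusoidal_positional_encoding(seq_len: int, d_model: int) -> torch.Tensor:
    """Return a (seq_len, d_model) table of sinusoidal position vectors."""
    assert d_model % 2 == 0, "this sketch assumes an even d_model"
    pos = torch.arange(seq_len, dtype=torch.float32).unsqueeze(1)  # (seq_len, 1)
    two_i = torch.arange(0, d_model, 2, dtype=torch.float32)       # 0, 2, 4, ...
    # 1 / 10000^(2i / d_model), computed as exp(-(2i / d_model) * ln 10000)
    inv_denominator = torch.exp(-two_i / d_model * math.log(10000.0))
    pe = torch.zeros(seq_len, d_model)
    pe[:, 0::2] = torch.sin(pos * inv_denominator)  # PE(pos, 2i)
    pe[:, 1::2] = torch.cos(pos * inv_denominator)  # PE(pos, 2i+1)
    return pe


pe = sinusoidal_positional_encoding(seq_len=128, d_model=64)
# Adjacent positions are more similar than distant ones.
print((torch.dot(pe[10], pe[11]) > torch.dot(pe[10], pe[100])).item())
```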

Modern models use different schemes. GPT-3 uses learned positional embeddings, Llama uses rotary positional embeddings (RoPE), and ALiBi appears in models such as BLOOM. RoPE has become the default in most recent open-weight LLMs, and techniques such as position interpolation let RoPE models be extended to longer contexts than they were trained on. If you are building something today, start with RoPE.
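For intuition about RoPE, here is a minimal sketch of one common formulation: split each query or key vector into pairs of dimensions and rotate each pair by an angle proportional to the token's position, using the same geometric frequency schedule as the sinusoidal encoding. Real implementations differ in how they pair dimensions and apply this per head inside attention; those details are omitted here. The property that matters is that the dot product of a rotated query and a rotated key depends only on their relative offset.

```python
import torch


def apply_rope(x: torch.Tensor, positions: torch.Tensor, base: float = 10000.0) -> torch.Tensor:
    """Rotate (seq_len, d) vectors by position; d must be even.

    Dimension j is paired with dimension j + d/2 (the "rotate half" layout).
    """
    d = x.shape[-1]
    half = d // 2
    # Frequencies base^(-2i/d) for i = 0 .. d/2 - 1, as in the sinusoidal encoding.
    inv_freq = base ** (-torch.arange(half, dtype=torch.float32) * 2 / d)
    angles = positions.to(torch.float32)[:, None] * inv_freq[None, :]  # (seq_len, d/2)
    cos, sin = angles.cos(), angles.sin()
    x1, x2 = x[:, :half], x[:, half:]
    # Standard 2-D rotation applied to every (x1[j], x2[j]) pair.
    return torch.cat([x1 * cos - x2 * sin, x1 * sin + x2 * cos], dim=-1)


torch.manual_seed(0)
q, k = torch.randn(1, 8), torch.randn(1, 8)
# Query at position 3 with key at 1, and query at 10 with key at 8: same offset of 2.
score_a = (apply_rope(q, torch.tensor([3])) * apply_rope(k, torch.tensor([1]))).sum()
score_b = (apply_rope(q, torch.tensor([10])) * apply_rope(k, torch.tensor([8]))).sum()
print(torch.allclose(score_a, score_b, atol=1e-4))
```

Because each pair is only rotated, vector lengths are preserved; position enters the attention score purely through the angle between query and key.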

The Full Transformer Block

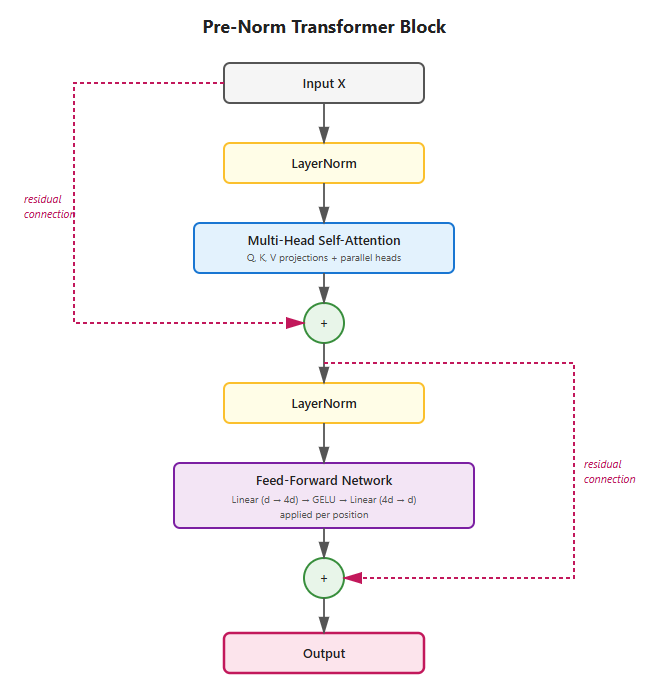

Stack a multi-head attention layer, a feed-forward network, and two residual connections with layer normalization, and you have a transformer block.

The diagram shows the pre-norm variant, which nearly every modern model uses. The two dashed red lines are residual connections that let gradients flow directly from output back to input, which is what makes deep stacks of these blocks trainable in the first place.

Here is the code.

```python
class TransformerBlock(nn.Module):
    def __init__(self, d_model, num_heads, d_ff, dropout=0.1):
        super().__init__()
        self.attention = MultiHeadAttention(d_model, num_heads)
        self.norm1 = nn.LayerNorm(d_model)
        self.norm2 = nn.LayerNorm(d_model)
        self.ff = nn.Sequential(
            nn.Linear(d_model, d_ff),
            nn.GELU(),
            nn.Linear(d_ff, d_model),
        )
        self.dropout = nn.Dropout(dropout)

    def forward(self, x, mask=None):
        # Pre-norm formulation: normalize, transform, then add back to the residual stream.
        attended = self.attention(self.norm1(x), mask)
        x = x + self.dropout(attended)
        ff_out = self.ff(self.norm2(x))
        x = x + self.dropout(ff_out)
        return x
```

A few production-grade decisions hide in this block.

Pre-norm versus post-norm is a real choice. The original paper put LayerNorm after the residual addition. Modern models put it before. Pre-norm trains more stably at scale, especially without learning rate warmup. If you are training anything bigger than a toy, use pre-norm.

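The feed-forward dimension d_ff is typically 4 times d_model. The ratio comes from the original paper (d_model = 512, d_ff = 2048) and carries through BERT, GPT-2, GPT-3, and most models with a plain two-layer feed-forward network. Models with gated feed-forward layers, such as Llama, use roughly 8/3 times d_model instead, which keeps the parameter count about the same. It is a convention that works well, not a law.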

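GELU generally works better than ReLU in transformers, and BERT and GPT-2 both use it. Many recent models, including Llama and PaLM, go a step further with SwiGLU or similar gated variants, which Shazeer's 2020 experiments found improve quality at roughly matched compute. If you want to be modern, use SwiGLU.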
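A SwiGLU feed-forward layer could stand in for the nn.Sequential inside TransformerBlock along these lines. It follows the gated form described by Shazeer (2020), SiLU(x · W_gate) ⊙ (x · W_up) followed by a down projection; the layer names and the bias-free choice are assumptions of this sketch. With three weight matrices instead of two, d_ff is set to about (8/3) · d_model so the parameter count stays close to a 4× GELU layer.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class SwiGLUFeedForward(nn.Module):
    """Gated feed-forward layer: down(silu(gate(x)) * up(x))."""

    def __init__(self, d_model: int, d_ff: int):
        super().__init__()
        self.gate = nn.Linear(d_model, d_ff, bias=False)
        self.up = nn.Linear(d_model, d_ff, bias=False)
        self.down = nn.Linear(d_ff, d_model, bias=False)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # SiLU (Swish) on the gate branch, elementwise product with the up branch.
        return self.down(F.silu(self.gate(x)) * self.up(x))


d_model = 512
d_ff = int(8 * d_model / 3)  # about 8/3 * d_model, keeping parameters near 8 * d_model**2
ffn = SwiGLUFeedForward(d_model, d_ff)
print(ffn(torch.randn(2, 10, d_model)).shape)  # same shape in, same shape out
```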

From Transformer to GPT

The original 2017 transformer had two halves. An encoder for reading and a decoder for generating. BERT in 2018 kept only the encoder. GPT in 2018 kept only the decoder, minus the cross-attention layer that had connected it to the encoder. Once that layer is gone, the key architectural difference between the two stacks is the attention mask.

A decoder uses causal masking. Token i can attend to tokens 1 through i but not to tokens i+1 and beyond. This is what makes generation possible. You train the model to predict the next token given all previous tokens, in parallel for every position in the sequence.

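```python
def causal_mask(seq_len, device):
    # Lower-triangular ones: each position may attend to itself and earlier positions only.
    mask = torch.tril(torch.ones(seq_len, seq_len, device=device))
    # Add batch and head dimensions so it broadcasts over (batch, num_heads, seq_len, seq_len).
    return mask.unsqueeze(0).unsqueeze(0)
```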

This single function, applied inside the attention mechanism, is the key architectural difference between BERT and GPT (their training objectives differ too). Stack 96 such blocks, as GPT-3 does, train on a few hundred billion tokens of internet text, fine-tune with supervised instruction data and reinforcement learning from human feedback, and you have the recipe behind ChatGPT.
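One way to convince yourself the mask does its job: change the last token and check that the outputs at earlier positions do not move. The sketch below builds the same lower-triangular mask as causal_mask (without the extra batch and head dimensions) and uses plain single-head attention where the inputs double as queries, keys, and values, a simplification made only to keep it short.

```python
import math

import torch

torch.manual_seed(0)
n, d = 5, 4
mask = torch.tril(torch.ones(n, n))  # 1 where attention is allowed (key index <= query index)


def masked_attention(x: torch.Tensor) -> torch.Tensor:
    # Queries, keys, and values are x itself in this sketch.
    scores = x @ x.T / math.sqrt(d)
    scores = scores.masked_fill(mask == 0, float("-inf"))
    return torch.softmax(scores, dim=-1) @ x


x = torch.randn(n, d)
x_changed = x.clone()
x_changed[-1] = torch.randn(d)  # alter only the final token

out, out_changed = masked_attention(x), masked_attention(x_changed)
print(torch.allclose(out[:-1], out_changed[:-1]))  # earlier positions are unaffected
print(torch.allclose(out[-1], out_changed[-1]))    # the last position does change
```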

Once you have a model this powerful, deploying it raises hard questions about responsibility, which we explored in our piece on who takes the blame when AI agents mess up.

The architectural simplicity is almost insulting. The same block, repeated. The same attention, repeated. No recurrence, no convolution, no fancy memory module. The breakthrough was realizing that scale plus this one mathematical operation could replace decades of architectural complexity.

Practical Tips for Working With Transformers

Here are things that took the field years to learn and that you can adopt for free.

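Initialize carefully. Common schemes draw weights from a normal distribution with a small standard deviation, on the order of 1/√d or a fixed 0.02 as in GPT-2, and GPT-2 additionally scales the weights of its residual layers by 1/√N, where N is the number of residual layers. If you deviate, do the math first. Bad initialization in deep transformers often shows up as loss spikes or outright divergence within the first few hundred steps.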
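As one concrete scheme, here is a minimal sketch of GPT-2-style initialization: normal weights with standard deviation 0.02, zero biases, and an extra 1/√N shrink on the projections that write into the residual stream. Which layers count as residual projections is passed in by name suffix, an assumption of this sketch, and N is taken as twice the number of blocks because each block adds to the residual stream twice.

```python
import math

import torch.nn as nn


def init_gpt2_style(model: nn.Module, num_blocks: int,
                    residual_suffixes=("W_o",), std: float = 0.02) -> None:
    """In-place init: N(0, std) weights, zero biases, smaller std for residual projections."""
    num_residual_layers = 2 * num_blocks  # attention and feed-forward each add to the residual
    for name, module in model.named_modules():
        if isinstance(module, (nn.Linear, nn.Embedding)):
            layer_std = std
            if name.endswith(residual_suffixes):
                layer_std = std / math.sqrt(num_residual_layers)
            nn.init.normal_(module.weight, mean=0.0, std=layer_std)
            if getattr(module, "bias", None) is not None:
                nn.init.zeros_(module.bias)


# Usage on a stand-in model with one "residual" projection named W_o.
demo = nn.ModuleDict({"W_q": nn.Linear(512, 512), "W_o": nn.Linear(512, 512)})
init_gpt2_style(demo, num_blocks=12)
print(demo["W_q"].weight.std().item(), demo["W_o"].weight.std().item())
```

With the TransformerBlock from earlier, the two residual projections are named attention.W_o and ff.2, so residual_suffixes=("W_o", "ff.2") would cover both.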

Watch your gradient norms. A gradient norm that spikes repeatedly, or keeps climbing, during what should be stable training usually points to a problem with the learning rate or initialization. Clipping the global gradient norm at 1.0 is a common default in large training runs.
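In PyTorch, clipping and monitoring come from the same call: torch.nn.utils.clip_grad_norm_ rescales gradients in place and returns the total norm measured before clipping, which is the number worth logging. The model, data, and loss below are placeholders.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
model = nn.Sequential(nn.Linear(16, 64), nn.GELU(), nn.Linear(64, 1))  # placeholder model
optimizer = torch.optim.AdamW(model.parameters(), lr=3e-4)

x, y = torch.randn(32, 16), torch.randn(32, 1)
loss = F.mse_loss(model(x), y)
loss.backward()

# Returns the global L2 norm measured before clipping, then rescales grads in place.
grad_norm = torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0)
clipped_norm = torch.norm(torch.stack([p.grad.norm() for p in model.parameters()]))
print(f"before: {grad_norm.item():.3f}  after: {clipped_norm.item():.3f}")

optimizer.step()
optimizer.zero_grad()
```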

Transformer Hyperparameters at Common Scales

| Model Class | Parameters | d_model | num_heads | num_layers | Context Length |
|---|---|---|---|---|---|
| Tiny (educational) | ~10M | 256 | 4 | 6 | 512 |
| Small (BERT-base) | 110M | 768 | 12 | 12 | 512 |
| Medium (GPT-2 medium) | 355M | 1024 | 16 | 24 | 1024 |
| Large (GPT-2 XL) | 1.5B | 1600 | 25 | 48 | 1024 |
| XL (Llama 2 7B) | 7B | 4096 | 32 | 32 | 4096 |
| Huge (Llama 2 70B) | 70B | 8192 | 64 | 80 | 4096 |
| Frontier (GPT-4, estimated) | ~1.7T | unknown | unknown | unknown | 8k to 128k, by version |

These sizes are not arbitrary. Their overall scale follows scaling laws first articulated by Kaplan et al. in 2020 and refined by the Chinchilla paper in 2022. The relationship between model size, dataset size, and compute budget is now understood well enough that you can predict a model's loss from its parameter count and training tokens with reasonable accuracy, at least within the range the laws were fitted on.
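Two back-of-the-envelope formulas make the table and the scaling-law point concrete. Each block holds about 12 · d_model² weights (4 · d_model² in the attention projections plus 8 · d_model² in a 4× feed-forward layer), ignoring embeddings, biases, and norms. The Chinchilla results are often summarized as roughly 20 training tokens per parameter, with training compute near 6 · N · D FLOPs for N parameters and D tokens. Both are rough approximations, not exact accounting.

```python
def approx_block_params(d_model: int, num_layers: int) -> int:
    # 4*d^2 for the Q, K, V, O projections + 8*d^2 for a 4x feed-forward layer, per block.
    return 12 * num_layers * d_model ** 2


def chinchilla_budget(num_params: float, tokens_per_param: float = 20.0) -> tuple[float, float]:
    """Return (training tokens, training FLOPs) under the rough 20-tokens-per-parameter rule."""
    tokens = tokens_per_param * num_params
    flops = 6 * num_params * tokens  # ~6 FLOPs per parameter per token (forward + backward)
    return tokens, flops


print(f"GPT-2 XL blocks: {approx_block_params(1600, 48) / 1e9:.2f}B parameters")
tokens, flops = chinchilla_budget(7e9)
print(f"7B model: ~{tokens / 1e9:.0f}B tokens, ~{flops:.1e} FLOPs")
```

The block estimate for GPT-2 XL lands close to its 1.5B total once the embedding matrix is added back.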

Use mixed precision. Run the forward and backward compute in BF16 while keeping FP32 master weights and FP32 optimizer state. This is the default for any non-trivial transformer. Pure FP32 wastes memory and bandwidth. Pure FP16 risks small gradients underflowing to zero unless you add loss scaling.
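A minimal sketch of the BF16 pattern with torch.autocast. The parameters stay FP32 (the master copy the optimizer updates), while eligible operations such as matrix multiplications run in BF16 inside the context. It runs on CPU here so it works anywhere; on a GPU you would pass device_type="cuda". Because BF16 has the same exponent range as FP32, this setup does not need the loss scaling that FP16 training usually relies on.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
model = nn.Linear(64, 64)  # parameters are created in FP32
optimizer = torch.optim.AdamW(model.parameters(), lr=1e-3)
x = torch.randn(8, 64)

with torch.autocast(device_type="cpu", dtype=torch.bfloat16):
    out = model(x)                    # the matmul runs in BF16
    loss = out.float().pow(2).mean()  # compute the loss in FP32

loss.backward()   # gradients land on the FP32 parameters
optimizer.step()  # FP32 master weights, FP32 optimizer state

print(out.dtype, model.weight.dtype, model.weight.grad.dtype)
```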

Profile your attention. For sequences over a few thousand tokens, attention's quadratic memory becomes the bottleneck. FlashAttention computes exact attention in tiles without materializing the full n × n score matrix, so its memory grows linearly with sequence length. Use it through PyTorch's torch.nn.functional.scaled_dot_product_attention, which can dispatch to a FlashAttention kernel on supported GPUs, or directly via the flash-attn package.
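Swapping hand-written attention for PyTorch's fused function (available since PyTorch 2.0) is a small change, and PyTorch picks the backend itself, including FlashAttention kernels on supported GPUs. This sketch, with arbitrary shapes, checks that the fused call with is_causal=True matches the explicit formula with a lower-triangular mask.

```python
import math

import torch
import torch.nn.functional as F

torch.manual_seed(0)
batch, heads, seq_len, d_k = 2, 4, 16, 32
q, k, v = (torch.randn(batch, heads, seq_len, d_k) for _ in range(3))

# Fused path: is_causal=True applies the lower-triangular mask internally.
fused = F.scaled_dot_product_attention(q, k, v, is_causal=True)

# Explicit path: the same formula, written out.
scores = q @ k.transpose(-2, -1) / math.sqrt(d_k)
causal = torch.tril(torch.ones(seq_len, seq_len, dtype=torch.bool))
scores = scores.masked_fill(~causal, float("-inf"))
manual = torch.softmax(scores, dim=-1) @ v

print(torch.allclose(fused, manual, atol=1e-4))
```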

Think about memory architecture early. Standard transformers have no persistent memory beyond their context window, which is why memory-augmented architectures are an active research direction. We covered Google's Titans approach in our explainer on permanent digital memory and explored what unlimited memory looks like in practice in the Claude 4 memory experiment.

Tie input and output embeddings. The matrix that turns tokens into vectors and the matrix that turns vectors back into token logits have the same shape. Sharing them saves parameters and often improves quality. GPT-2 does this; some larger models, such as Llama 2, keep the two matrices separate.
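In PyTorch, tying is one assignment: point the output layer's weight at the embedding's weight. nn.Embedding(vocab, d).weight and nn.Linear(d, vocab).weight are both shaped (vocab, d), so no transpose is needed. The class below is a hypothetical skeleton with the transformer blocks left out, and the sizes are arbitrary.

```python
import torch
import torch.nn as nn


class TiedLanguageModelHead(nn.Module):
    """Hypothetical skeleton: embedding in, logits out, transformer blocks omitted."""

    def __init__(self, vocab_size: int, d_model: int):
        super().__init__()
        self.embed = nn.Embedding(vocab_size, d_model)
        self.lm_head = nn.Linear(d_model, vocab_size, bias=False)
        self.lm_head.weight = self.embed.weight  # share one (vocab_size, d_model) matrix

    def forward(self, token_ids: torch.Tensor) -> torch.Tensor:
        hidden = self.embed(token_ids)  # transformer blocks would go here
        return self.lm_head(hidden)     # (..., vocab_size) logits


model = TiedLanguageModelHead(vocab_size=1000, d_model=64)
print(sum(p.numel() for p in model.parameters()))  # 64000: the shared matrix is counted once
```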

Mind your tokenizer. The tokenizer is part of the model in every meaningful sense. Byte-pair encoding (BPE) and SentencePiece are the standards. Build your tokenizer on data that resembles your target domain. A general-purpose BPE trained on web text will fragment specialized notation like SMILES strings or LaTeX in painful ways.

Validate on held-out data, not just training loss. Training loss can look great while the model overfits something stupid. Track validation loss, or its exponent, perplexity, on a clean held-out set every 1000 steps or so.
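Perplexity is the exponential of the mean per-token cross-entropy, so it falls straight out of the validation loss you already compute. A small sketch with placeholder logits: a model that predicts uniformly over a vocabulary of size V has perplexity exactly V, which makes a handy sanity check.

```python
import math

import torch
import torch.nn.functional as F


@torch.no_grad()
def perplexity(logits: torch.Tensor, targets: torch.Tensor) -> float:
    """logits: (batch, seq_len, vocab); targets: (batch, seq_len) token ids."""
    vocab = logits.shape[-1]
    # Mean negative log-likelihood per token, in nats.
    nll = F.cross_entropy(logits.reshape(-1, vocab), targets.reshape(-1))
    return math.exp(nll.item())


vocab_size = 50
uniform_logits = torch.zeros(4, 10, vocab_size)  # every token equally likely
targets = torch.randint(0, vocab_size, (4, 10))
print(perplexity(uniform_logits, targets))  # about 50: uniform guessing over 50 tokens
```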

Save optimizer state, not just model weights. AdamW keeps running estimates of each parameter's first and second gradient moments. Restart with the weights alone and those estimates reset to zero, which can trigger loss spikes and waste steps while they rebuild.
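A checkpoint that survives restarts carries at least the model, the optimizer, and the step counter, plus any learning-rate scheduler and RNG state you depend on. Here is a minimal sketch with torch.save; the dictionary keys and the in-memory buffer are illustrative choices, and a real run would write to disk.

```python
import io

import torch
import torch.nn as nn

torch.manual_seed(0)
model = nn.Linear(8, 8)
optimizer = torch.optim.AdamW(model.parameters(), lr=1e-3)

# One training step so AdamW has moment estimates worth saving.
model(torch.randn(4, 8)).pow(2).mean().backward()
optimizer.step()

buffer = io.BytesIO()  # stands in for a checkpoint file
torch.save({"step": 1, "model": model.state_dict(), "optimizer": optimizer.state_dict()}, buffer)

# Restart: rebuild the objects, then restore both state dicts.
buffer.seek(0)
checkpoint = torch.load(buffer)
restored_model = nn.Linear(8, 8)
restored_model.load_state_dict(checkpoint["model"])
restored_opt = torch.optim.AdamW(restored_model.parameters(), lr=1e-3)
restored_opt.load_state_dict(checkpoint["optimizer"])

# The first-moment estimate (exp_avg) came back intact.
print(torch.equal(optimizer.state[model.weight]["exp_avg"],
                  restored_opt.state[restored_model.weight]["exp_avg"]))
```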

Why This Worked When Nothing Else Did

Sequence modeling has cycled through three main architectural families: RNNs (and their gated variants LSTM and GRU), CNNs (causal convolutions for sequences), and the transformer, which arrived in 2017 and largely replaced both within five years. Here is how they compare on the dimensions that actually matter.

Quick Comparison: Transformer vs RNN vs CNN

| Property | RNN / LSTM | 1D CNN | Transformer |
|---|---|---|---|
| Parallelism | None | Full within layer | Full across sequence |
| Long-range dependencies | Weak | Moderate | Strong |
| Memory complexity | O(n·d) | O(n·d·k) | O(n²·d) |
| Training speed | Slow | Fast | Very fast |
| Best use today | Legacy | Some audio | Dominant in NLP, vision |

Below is the full comparison with implementation details.

Transformer vs RNN vs CNN: Architecture Comparison

| Property | RNN / LSTM | 1D CNN | Transformer |
|---|---|---|---|
| Year of dominance | 2014 to 2017 | 2016 to 2017 | 2017 to present |
| Parallelism across sequence | None, strictly sequential | Full within layer | Full across full sequence |
| Path length between distant tokens | O(n) | O(log n) with dilated convs | O(1) |
| Long-range dependencies | Weak, gradients vanish past ~100 tokens | Moderate, limited by receptive field | Strong, every token sees every token |
| Memory complexity per layer | O(n · d) | O(n · d · k) where k is kernel size | O(n² · d) for vanilla attention |
| Compute per layer | O(n · d²) | O(n · d² · k) | O(n² · d) for attention + O(n · d²) for FFN |
| GPU utilization during training | Poor, sequential bottleneck | Excellent | Excellent |
| Variable sequence length handling | Native | Requires padding or masking | Requires padding or masking |
| Inductive bias | Recency bias | Locality bias | Minimal, mostly learned from data |
| Pretraining at scale | Limited success | Limited success | Foundation of all modern LLMs |
| Inference latency for generation | Fast per token, sequential | Fast | Slow without KV caching, fast with it |
| Best use today | Legacy systems, time series in some niches | Some audio and biosignal tasks | Dominant for language, vision, multimodal |
| Example models | LSTM seq2seq, ELMo | WaveNet, ByteNet | GPT, BERT, Llama, Claude, Gemini |

The transformer wins on most dimensions but does have one real weakness. The cost of vanilla attention is quadratic in sequence length, which is why pushing context windows past 100,000 tokens leans on tricks like sliding window attention (used in Mistral 7B), other sparse attention patterns, or exact but memory-efficient reformulations like FlashAttention.
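Some quick arithmetic shows why. A vanilla implementation materializes an n × n score matrix for every head in every layer. The sketch below assumes 2-byte (FP16 or BF16) scores and counts a single head in a single layer; real memory use also depends on batch size, head count, and what the backward pass keeps around.

```python
def attention_matrix_bytes(seq_len: int, bytes_per_value: int = 2) -> int:
    # One (seq_len x seq_len) score matrix for one head in one layer.
    return seq_len * seq_len * bytes_per_value


for n in (1_000, 8_000, 100_000):
    print(f"n={n:>7,}: {attention_matrix_bytes(n) / 1e9:8.3f} GB per head per layer")
```

At 100,000 tokens a single head's score matrix already needs 20 GB, before multiplying by dozens of heads and layers.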

The transformer combined three properties that nothing before had simultaneously achieved. Full parallelism across the sequence, since attention sees everything at once. Constant path length between any two positions, since attention reaches across the whole sequence in one operation. And a smooth optimization surface, since the architecture is essentially just stacked matrix multiplications with normalization.

These three properties matter most when you scale up. RNNs run into wall-clock training time. CNNs run into receptive field limits. Transformers run into memory, but memory is a problem you can throw money at, and the field has spent the years since 2017 throwing money at it with stunning returns.

Understanding how transformers work in deep learning is no longer optional for anyone serious about machine learning. The architecture has eaten natural language processing, made significant inroads into computer vision through Vision Transformers, conquered protein structure prediction in AlphaFold 2, and is now spreading into robotics and time series forecasting. Whatever you build next, there is a good chance a transformer will sit somewhere in the stack. The geographic distribution of who actually benefits from this technology is uneven, as we documented in our analysis of the global AI adoption map.

The 15-page paper from 2017 was titled "Attention Is All You Need." Almost a decade later, the title still holds.

Frequently Asked Questions

What is a transformer in deep learning?

A transformer is a neural network architecture introduced in 2017 that uses self-attention to process sequences in parallel rather than one element at a time. It replaced recurrent networks as the dominant approach for language, vision, and many other tasks because it scales better and captures long-range dependencies more effectively.

How does a transformer model work?

A transformer model works by converting input tokens into vectors, adding positional information, then passing them through stacked blocks of self-attention and feed-forward layers. Self-attention lets every token directly look at every other token in one operation. The same block is repeated dozens of times to refine the representation at each layer.

How does self-attention work?

Self-attention computes three projections of every input vector called queries, keys, and values. Each query is compared against every key using a dot product, the resulting scores are normalized with softmax, and the values are averaged using those weights. The result is that every position can directly attend to every other position.

Why are transformers better than RNNs?

Transformers parallelize across the entire sequence, so a GPU can process all tokens at once. They also create direct connections between any two positions regardless of distance, which sidesteps the vanishing gradient problem that plagued RNNs on long sequences. The combination makes transformers train faster and learn longer dependencies.

What is multi-head attention?

Multi-head attention runs several attention operations in parallel, each in a smaller subspace, then concatenates the results. Different heads learn to track different relationships, such as syntactic dependencies, positional patterns, or semantic roles, without any explicit supervision. The architecture gets diversity for free at roughly the same compute cost as single-head attention.

Is ChatGPT a transformer?

Yes. ChatGPT is built on the GPT family of models, which are decoder-only transformers trained to predict the next token in a sequence. The base model is then fine-tuned on supervised demonstrations and with reinforcement learning from human feedback to make its outputs more useful and aligned with what users want. For a hands-on comparison of how different GPT-based tools behave in practice, see our 30-day test of Ideogram vs ChatGPT for logo generation.

Who invented the transformer?

The transformer was invented by a team of eight researchers at Google Brain and Google Research who published the paper "Attention Is All You Need" in June 2017. The authors were Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, and Illia Polosukhin.

What is the difference between an encoder and a decoder transformer?

An encoder transformer reads an entire input sequence at once and produces contextual representations, used in models like BERT. A decoder transformer generates output tokens one at a time using causal masking, where each token can only attend to previous tokens. GPT models are decoder-only, while the original transformer used both an encoder and a decoder.

Why is it called attention?

It is called attention because the mechanism mimics how humans focus on specific parts of input while ignoring others. When you read a sentence, your mind selectively focuses on words that are relevant to understanding meaning. The attention mechanism does the same thing mathematically, weighting some tokens more heavily than others when computing each output.

What does the Q K V mean in transformers?

Q stands for queries, K for keys, and V for values. These three matrices are computed from the input by multiplying with learned weight matrices. Queries ask "what am I looking for," keys answer "what do I represent," and values provide the actual information to be aggregated. This naming follows the database lookup analogy.

What is positional encoding in transformers?

Positional encoding adds information about each token's position in the sequence to its embedding before attention sees it. Without positional encoding, the transformer would treat input as an unordered set since attention is permutation invariant. The original paper used sinusoidal functions, while modern models like Llama use rotary positional embeddings (RoPE).

What is the attention is all you need paper?

"Attention Is All You Need" is the 2017 paper by eight Google researchers that introduced the transformer architecture. It demonstrated that an architecture using only attention mechanisms, with no recurrence or convolutions, could outperform existing approaches on machine translation while training faster. The paper has since become one of the most cited works in machine learning.

How many parameters does GPT-4 have?

OpenAI has not officially disclosed GPT-4's parameter count. Public estimates and leaked information suggest GPT-4 uses a mixture-of-experts architecture with around 1.7 trillion total parameters across multiple expert networks, though only a subset activates per token. GPT-3, by comparison, has 175 billion parameters in a dense architecture.

How long does it take to train a transformer?

A small transformer with a few million parameters trains on a single GPU in hours. A research-grade model with hundreds of millions of parameters takes days on a multi-GPU server. Frontier models like GPT-4 require thousands of GPUs running for months, at an estimated compute cost of tens to hundreds of millions of dollars.

Can transformers be used for images?

Yes. Vision Transformers (ViT) split images into patches, treat each patch as a token, and process them with a standard transformer. Vision Transformers now match or exceed convolutional networks on most image classification benchmarks. However, they have known failure modes, as we documented in our piece on why vision transformers dump garbage into random pixels. Models like CLIP and Stable Diffusion use transformers for both vision and language tasks simultaneously.

What is the difference between a transformer and a neural network?

A transformer is a specific type of neural network architecture. All transformers are neural networks, but not all neural networks are transformers. The transformer is defined by its use of self-attention layers and the lack of recurrence or convolution. Other neural network architectures include CNNs for vision and RNNs for older sequence tasks.

What is BERT and how is it different from GPT?

BERT is a bidirectional encoder transformer trained to predict masked words in a sentence using context from both directions. GPT is a decoder-only transformer trained to predict the next word using only previous context. BERT excels at understanding tasks like classification, while GPT excels at generation tasks like writing and conversation.

Are transformers still the best architecture in 2026?

Transformers remain the dominant architecture in 2026 across language, vision, and multimodal tasks. Some alternatives like state-space models (Mamba) and hybrid memory-augmented architectures show promise for specific workloads, especially long-context tasks. Google's Titans approach is one of the most interesting recent developments in this direction, which we covered in detail in our explainer on permanent digital memory. Still, the transformer's combination of parallelism, scalability, and tooling support keeps it at the center of frontier AI research and production deployment.